Building Trustworthy Systems in an Age of Autonomous Software

The software being built today does more than automate workflows. It recommends, predicts, decides and, increasingly, acts. From AI copilots drafting contracts to autonomous systems triggering financial transactions, modern enterprises are entering an era where software executes on behalf of humans. As autonomy rises, so does a fundamental question: can the systems being built be trusted?

The Rise of Autonomous Software

Autonomous software systems combine AI, real-time data processing, and automated execution engines to perform tasks with minimal human intervention. Examples include AI-driven procurement approvals, self-healing IT infrastructure, intelligent fraud detection systems, autonomous supply chain optimization, and generative AI assistants integrated into enterprise workflows.

With advances popularized by organizations like OpenAI and large-scale AI adoption driven by companies such as Microsoft and Google, enterprise-grade autonomy has become an operational reality. But autonomy introduces risk vectors that traditional IT governance models were never designed to handle.

Trust Is the New Digital Currency

In such an environment, trust cannot be assumed. It must be engineered.

What Makes a System Trustworthy?

Building trustworthy autonomous systems requires deliberate design across five pillars:

- Transparency by Design- Autonomous systems must be explainable.

  - Why was a decision made?

  - What data influenced it?

  - Can outcomes be audited?

Black-box intelligence is unacceptable in regulated environments. Explainability layers, traceable data pipelines, and model documentation must be embedded into architecture.
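As a rough illustration of what an auditable decision trail might look like, the sketch below records each decision with its rationale and input data, and chains entries by hash so that later edits become detectable. The names `DecisionRecord` and `AuditLog` are hypothetical and chosen for this example only; a production system would also need durable storage and access controls.

```python
import hashlib
import json
from dataclasses import dataclass, asdict, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class DecisionRecord:
    """One auditable decision: what was decided, why, and from which data."""
    decision: str
    rationale: str        # answers "why was a decision made?"
    inputs: dict          # answers "what data influenced it?"
    model_version: str
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


class AuditLog:
    """Append-only log (hypothetical); each hash covers the previous hash."""

    def __init__(self):
        self.entries = []  # list of (record_dict, entry_hash) pairs

    def append(self, record: DecisionRecord) -> str:
        prev_hash = self.entries[-1][1] if self.entries else ""
        payload = json.dumps(asdict(record), sort_keys=True)
        entry_hash = hashlib.sha256((prev_hash + payload).encode()).hexdigest()
        self.entries.append((asdict(record), entry_hash))
        return entry_hash

    def verify(self) -> bool:
        """Recompute the chain; an edited entry no longer matches its hash."""
        prev_hash = ""
        for record, stored_hash in self.entries:
            payload = json.dumps(record, sort_keys=True)
            expected = hashlib.sha256((prev_hash + payload).encode()).hexdigest()
            if expected != stored_hash:
                return False
            prev_hash = stored_hash
        return True
```

An auditor could call `verify()` at any time to check that no recorded decision has been altered after the fact.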

- Human-in-the-Loop Governance- Autonomy should elevate oversight, not eliminate it.

Organizations need escalation thresholds, override mechanisms, role-based access controls, and clear human accountability maps. The future is structured collaboration between humans and machines.
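To make escalation thresholds and override mechanisms more concrete, here is a minimal sketch of how an autonomous action might be routed. The threshold values, the `Policy` fields, and the `"approver"` role are all assumptions for illustration; real values would come from an organization's own risk tolerance.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTO_EXECUTE = "auto_execute"
    HUMAN_REVIEW = "human_review"
    BLOCK = "block"


@dataclass
class Policy:
    # Hypothetical thresholds, not recommendations.
    max_auto_amount: float = 10_000.0
    min_confidence: float = 0.90
    hard_limit_amount: float = 1_000_000.0


def route_action(amount: float, confidence: float, policy: Policy) -> Route:
    """Decide whether the system may act alone or must escalate to a human."""
    if amount > policy.hard_limit_amount:
        return Route.BLOCK
    if amount > policy.max_auto_amount or confidence < policy.min_confidence:
        return Route.HUMAN_REVIEW
    return Route.AUTO_EXECUTE


def apply_override(route: Route, reviewer_role: str, approved: bool) -> Route:
    """Role-based override: only an 'approver' can resolve an escalation."""
    if route is Route.HUMAN_REVIEW and reviewer_role == "approver":
        return Route.AUTO_EXECUTE if approved else Route.BLOCK
    return route
```

In this sketch a blocked action cannot be released by any reviewer, which keeps the hard limit outside the override path; whether that is the right choice depends on the accountability map an organization defines.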

- Ethical Guardrails and Bias Mitigation- Autonomous systems inherit biases from data. Without proper controls, hiring systems may discriminate, credit systems may exclude, or healthcare systems may misdiagnose. Ethical AI frameworks must include bias testing protocols, fairness audits, continuous model retraining, and diverse dataset validation. Trustworthy systems are socially responsible.

- Resilience and Fail-Safe Architecture- Autonomous systems must be designed on the assumption that failures will occur. Core design principles include circuit breakers, transaction rollback mechanisms, redundancy layers, and continuous monitoring. In an autonomous enterprise, resilience is foundational.

- Regulatory Alignment and Data Sovereignty- Governments globally are responding to AI expansion with governance frameworks. For example, the European Union introduced the AI Act to regulate high-risk AI systems, emphasizing transparency and accountability.

Similarly, India has emphasized a human-centric approach to AI through policy discussions at global forums and initiatives like the MANAV governance framework. Enterprises must proactively align with evolving standards instead of reacting post-incident.
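The bias testing protocols named under Ethical Guardrails above could start with something as simple as comparing selection rates across groups. The sketch below computes a disparate impact ratio, one of several possible fairness measures; the function names and the sample data are illustrative assumptions, not a complete fairness audit.

```python
from collections import defaultdict


def selection_rates(outcomes):
    """outcomes: iterable of (group, selected) pairs, where selected is a bool."""
    totals = defaultdict(int)
    selected = defaultdict(int)
    for group, was_selected in outcomes:
        totals[group] += 1
        selected[group] += int(was_selected)
    return {g: selected[g] / totals[g] for g in totals}


def disparate_impact_ratio(outcomes):
    """Lowest group selection rate divided by the highest (1.0 means parity)."""
    rates = selection_rates(outcomes)
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was selected in any group, so no disparity to measure
    return min(rates.values()) / highest


# Made-up sample: group A is selected 6 times out of 10, group B 3 out of 10.
sample = (
    [("A", True)] * 6 + [("A", False)] * 4
    + [("B", True)] * 3 + [("B", False)] * 7
)
ratio = disparate_impact_ratio(sample)
```

A ratio well below 1.0, as in this made-up sample, would be a signal to investigate further. A commonly cited rule of thumb flags ratios under 0.8, though the right threshold depends on context and applicable regulation.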
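Of the fail-safe mechanisms listed under Resilience and Fail-Safe Architecture above, the circuit breaker is perhaps the easiest to sketch. The version below is a simplified illustration with hypothetical parameter names: after a set number of consecutive failures it refuses further calls for a cool-down period, then allows a single trial call.

```python
import time


class CircuitOpenError(RuntimeError):
    """Raised when a call is refused because the breaker is open."""


class CircuitBreaker:
    def __init__(self, max_failures=3, reset_after=30.0, clock=time.monotonic):
        self.max_failures = max_failures
        self.reset_after = reset_after  # seconds to wait before a trial call
        self.clock = clock              # injectable so tests can control time
        self.failures = 0
        self.opened_at = None           # None means the breaker is closed

    def call(self, func, *args, **kwargs):
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after:
                raise CircuitOpenError("breaker open; call refused")
            # Cool-down elapsed: fall through and allow one trial call.
        try:
            result = func(*args, **kwargs)
        except Exception:
            self.failures += 1
            if self.failures >= self.max_failures:
                self.opened_at = self.clock()  # open, or re-open after a failed trial
            raise
        # A success closes the breaker and clears the failure count.
        self.failures = 0
        self.opened_at = None
        return result
```

Wrapping an autonomous agent's outbound actions in such a breaker means a misbehaving downstream service stops receiving automated calls until it has had time to recover.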

The Consultant’s Role in a Trust-First Era

As enterprises push toward autonomy, the challenge is architectural and cultural. Consulting partners must help organizations define risk tolerance boundaries, create AI governance frameworks, integrate compliance into DevOps pipelines, redesign operating models for AI-enabled decision loops, and establish cross-functional AI oversight committees. Trust cannot be retrofitted. It must be architected from day one.

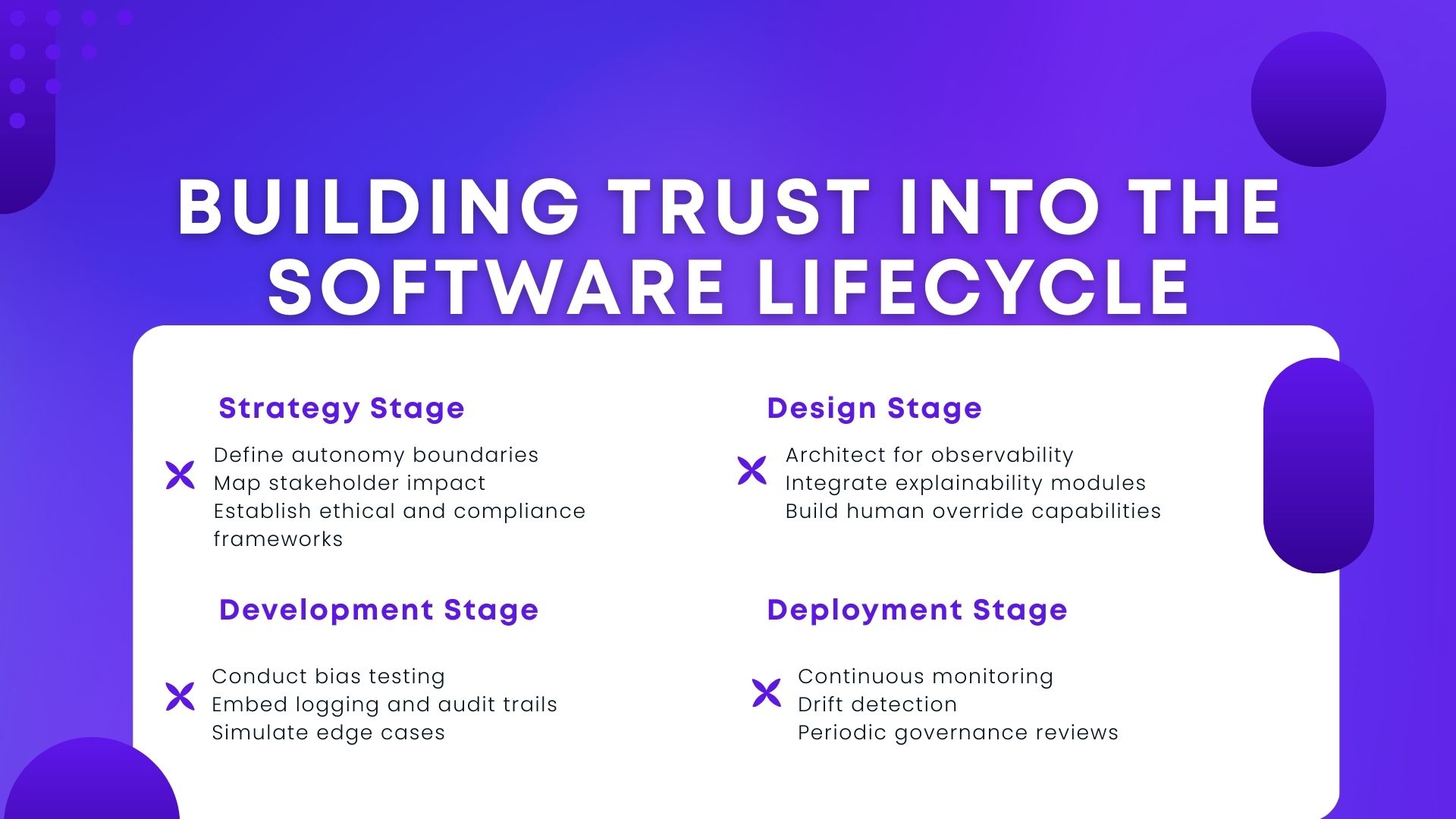

Building Trust into the Software Lifecycle

At PCPL, we believe trustworthy systems are built across the lifecycle.

Trust becomes measurable when it is engineered.

Trust as Competitive Advantage

Enterprises are racing to automate faster than their competitors, but the winners will be those who deploy the most reliable AI.

Customers will favor systems that protect their data, provide clarity in decisions, demonstrate fairness, and offer accountability. Investors will reward companies that proactively manage AI risk. Employees will trust systems that augment rather than threaten their roles.

Conclusion

Autonomous software is inevitable, but untrustworthy systems are not. The enterprises that succeed will treat trust not as a legal necessity but as an engineering discipline. Because in a world where software makes decisions, trust is the real infrastructure.

If your organization is exploring AI-driven automation, the real question is how deliberately you will build trust into what you deploy.
