Risk-Aware AI Systems For Modern Businesses

We have all seen artificial intelligence evolve from an experimental capability into an important business enabler. Organizations are now integrating AI into decision-making, operations, customer experience, and even strategic planning. But as adoption accelerates, so do the risks. Biased outputs and costly automation errors are already occurring, and not every situation needs AI involvement.

Traditional AI systems are designed with a bias toward action. Whether it’s approving a loan, flagging a transaction, recommending a product, or triggering a workflow, most systems are optimized for output generation. However, in real-world business scenarios, this can lead to false positives and negatives in critical decisions, automation of flawed logic at scale, unintended consequences due to lack of context, and loss of human oversight in high-stakes scenarios.

For example, an AI model trained to detect fraudulent transactions may block legitimate payments if it lacks sufficient contextual understanding. Similarly, a predictive maintenance system might trigger unnecessary shutdowns based on incomplete data, leading to operational losses. The main problem is that these systems lack risk awareness and they act even when they shouldn’t.

What is Risk-Aware AI?

Risk-Aware AI refers to systems that are designed not just to make decisions, but to evaluate the confidence, context, and consequences of those decisions before acting. In simple terms, it means building AI that can say that it is not confident enough to make a particular decision and ask for human intervention. This transforms AI from a decision-maker into a decision-support system with judgment boundaries.

Principles of Risk-Aware AI

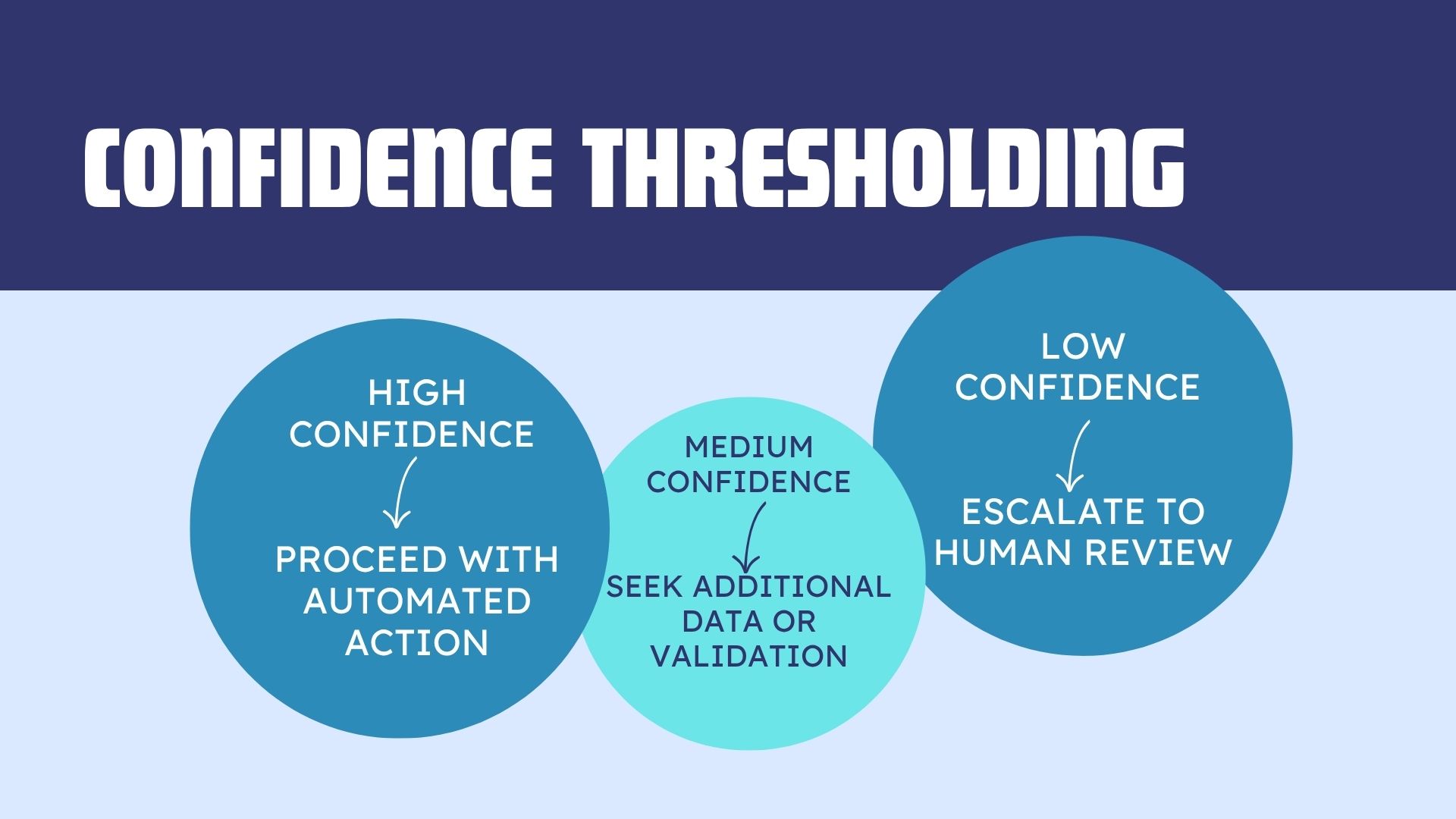

- Confidence Thresholding

Every AI prediction comes with a degree of uncertainty. Risk-aware systems quantify this uncertainty and define thresholds for action.

This prevents blind automation and ensures that decisions align with acceptable risk levels.
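As a minimal sketch of confidence thresholding, the snippet below maps a model's confidence score to one of three outcomes. The names `Action`, `decide`, `ACT_THRESHOLD`, and `ESCALATE_THRESHOLD`, and the numeric values, are illustrative assumptions rather than recommended settings.

```python
from enum import Enum


class Action(Enum):
    ACT = "act"            # confident enough to automate
    ESCALATE = "escalate"  # route to a human reviewer
    DEFER = "defer"        # take no action for now


# Hypothetical thresholds; real values should reflect the business's risk appetite.
ACT_THRESHOLD = 0.90
ESCALATE_THRESHOLD = 0.60


def decide(confidence: float) -> Action:
    """Map a model confidence score in [0, 1] to an action."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if confidence >= ACT_THRESHOLD:
        return Action.ACT
    if confidence >= ESCALATE_THRESHOLD:
        return Action.ESCALATE
    return Action.DEFER
```

With these assumed values, a score of 0.95 is automated, 0.75 goes to a reviewer, and 0.20 results in no action.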

- Context Awareness

AI models often operate within limited data scopes. Risk-aware systems incorporate contextual signals such as business rules, regulatory constraints, historical anomalies, and environmental factors. For example, a supply chain AI might delay automated procurement decisions during geopolitical disruptions, even if demand forecasts suggest otherwise.
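The supply chain example can be sketched as a simple context gate: the forecast alone is not enough to trigger automation. The `Context` fields and the `should_auto_procure` function are hypothetical names chosen for illustration, and a real system would draw these signals from live data sources.

```python
from dataclasses import dataclass


@dataclass
class Context:
    # Hypothetical contextual signals, named after the examples in the text.
    geopolitical_disruption: bool = False
    violates_business_rule: bool = False


def should_auto_procure(forecast_demand: int, stock: int, ctx: Context) -> bool:
    """Automate the purchase only when the forecast calls for it AND context allows it."""
    forecast_says_buy = forecast_demand > stock
    context_allows = not (ctx.geopolitical_disruption or ctx.violates_business_rule)
    return forecast_says_buy and context_allows
```

When a disruption flag is set, the function returns `False` even if forecast demand exceeds stock, leaving the decision to a person.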

- Human-in-the-Loop Design

Instead of replacing humans, risk-aware AI integrates them at important decision points. This includes approval workflows for high-risk actions, override mechanisms for edge cases, and continuous feedback loops to improve model performance. The goal is to ensure that speed does not compromise accuracy or accountability.
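One way to picture an approval workflow with an override path is the in-memory queue below. It is only a sketch: `ReviewQueue` and its methods are hypothetical, and a production system would use a persistent store and real reviewer tooling.

```python
from dataclasses import dataclass, field


@dataclass
class ReviewQueue:
    """In-memory stand-in for an approval workflow (hypothetical)."""
    pending: list = field(default_factory=list)
    feedback: list = field(default_factory=list)

    def submit(self, case_id: str, proposed: str, high_risk: bool) -> str:
        # High-risk actions wait for a human; low-risk ones proceed automatically.
        if high_risk:
            self.pending.append((case_id, proposed))
            return "pending_approval"
        return proposed

    def resolve(self, case_id: str, human_decision: str) -> str:
        # The reviewer may approve or override; either way the outcome is
        # recorded so it can feed back into model improvement.
        for item in self.pending:
            if item[0] == case_id:
                self.pending.remove(item)
                self.feedback.append((case_id, item[1], human_decision))
                return human_decision
        raise KeyError(case_id)
```

Each entry in `feedback` pairs the AI's proposal with the human's final decision, which gives the feedback loop something concrete to learn from.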

- Fail-Safe Mechanisms

What happens when AI systems fail? Risk-aware AI includes built-in safeguards such as default fallback actions, graceful degradation of functionality, and alert systems for anomalies. For example, in financial systems, if an AI model fails to assess credit risk, the system might revert to rule-based evaluation instead of making an uninformed decision.
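The credit-risk fallback described above might look like the following sketch. The 40% debt-to-income rule, the function names, and the `"approve"`/`"refer"` labels are assumptions made for illustration, not an actual lending policy.

```python
import logging

logger = logging.getLogger("risk_aware")


def rule_based_credit_check(income: float, debt: float) -> str:
    """Hypothetical fallback rule: refer to manual review if debt exceeds 40% of income."""
    if income <= 0:
        return "refer"
    return "approve" if debt / income <= 0.40 else "refer"


def assess_credit(model, income: float, debt: float) -> tuple[str, str]:
    """Return (decision, source). Falls back to rules if the model call fails."""
    try:
        return model(income, debt), "model"
    except Exception:
        # Alert operators, then degrade gracefully instead of guessing.
        logger.warning("credit model failed; using rule-based fallback", exc_info=True)
        return rule_based_credit_check(income, debt), "rules"
```

Returning the source alongside the decision lets downstream systems and auditors see when the fallback path was used.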

- Explainability and Transparency

Understanding why an AI system made or avoided a decision is important for trust. Risk-aware systems provide decision explanations, confidence scores, and audit trails. This is especially important in regulated industries like healthcare, finance, and insurance.
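A decision explanation, confidence score, and audit trail entry can be captured together in one record. The sketch below assumes a JSON-lines audit log; the `DecisionRecord` fields and the `record_decision` helper are hypothetical.

```python
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone


@dataclass
class DecisionRecord:
    case_id: str
    action: str        # e.g. "act", "escalate", "defer"
    confidence: float
    explanation: str   # human-readable reason for acting or not acting
    timestamp: str


def record_decision(case_id: str, action: str, confidence: float, explanation: str) -> str:
    """Serialize one decision as a JSON line suitable for an append-only audit log."""
    rec = DecisionRecord(case_id, action, confidence, explanation,
                         datetime.now(timezone.utc).isoformat())
    return json.dumps(asdict(rec))
```

Logging the cases where the system chose not to act matters as much as logging the actions it took, since both may need to be justified later.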

Designing AI Systems That Know When Not to Act

Building risk-aware AI requires a shift in design philosophy and a rethinking of system architecture.

- Identify High-Risk Decision Points: Not all decisions carry equal risk. Start by mapping each decision's financial impact, customer experience implications, and compliance requirements. Focus risk-aware design on areas where errors are costly or irreversible.

- Define Risk Boundaries: Establish clear criteria for when AI can act autonomously, when human intervention is required, and when decisions should be deferred. These boundaries should be aligned with business objectives and risk appetite.

- Integrate Multi-Layer Validation: Instead of relying on a single model, use ensemble models, rule-based checks, and external data validation. This reduces the likelihood of erroneous actions.

- Build Feedback Loops: Risk-aware systems learn not just from success, but from hesitation. Track escalated decisions, human overrides, and edge-case scenarios. Use this data to continuously refine thresholds and improve model reliability.

- Monitor in Real Time: Deploy monitoring systems that track model drift, prediction confidence trends, and anomalous behavior. This ensures that AI systems remain aligned with real-world conditions.
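To make the monitoring step concrete, the sketch below tracks a rolling mean of prediction confidence and raises a flag when it stays low. It is a simple proxy for a confidence trend, not a full drift test, and the `ConfidenceMonitor` class, window size, and alert level are assumed values for illustration.

```python
from collections import deque


class ConfidenceMonitor:
    """Tracks the rolling mean of prediction confidence and flags sustained drops."""

    def __init__(self, window: int = 100, alert_below: float = 0.70):
        # Both defaults are hypothetical and would need tuning per use case.
        self.scores = deque(maxlen=window)
        self.alert_below = alert_below

    def observe(self, confidence: float) -> bool:
        """Record a score; return True if the rolling mean has fallen below the alert level."""
        self.scores.append(confidence)
        window_full = len(self.scores) == self.scores.maxlen
        mean = sum(self.scores) / len(self.scores)
        return window_full and mean < self.alert_below
```

Waiting for a full window before alerting avoids reacting to a single low-confidence prediction. An alert here could trigger tighter thresholds or a recalibration review.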

Applications of Risk-Aware AI

- Financial Services

In banking and fintech, risk-aware AI can prevent fraudulent transactions without blocking legitimate ones, escalate ambiguous cases for manual review, and ensure compliance with evolving regulations.

- Healthcare

AI-assisted diagnosis systems can provide recommendations with confidence levels, flag uncertain cases for specialist review, and avoid over-reliance on automated decisions.

- Supply Chain and Logistics

Risk-aware AI can delay automated decisions during disruptions, incorporate real-time external data such as weather and geopolitical events, and optimize operations without compromising resilience.

- Customer Experience

Chatbots and recommendation engines can escalate complex queries to human agents, avoid inappropriate or irrelevant suggestions, and maintain brand trust.

The Cost of Ignoring Risk Awareness

Organizations that deploy AI without risk-awareness often face reputational damage, regulatory penalties, financial losses, and erosion of customer trust. In contrast, risk-aware AI builds resilience, trust, and long-term value.

How PCPL Helps with Risk-Aware AI Adoption

We understand that adopting AI is a business risk decision. We help organizations identify where AI should and should not be applied, ensuring alignment with business goals and risk tolerance. We design AI architectures that incorporate confidence scoring, decision thresholds, and human-in-the-loop workflows. We embed risk management into AI systems through auditability, explainability, and regulatory alignment. Because AI systems are not static, we ensure ongoing performance through real-time monitoring, feedback integration, and model recalibration.

The Future Is for Responsible Intelligence

As AI continues to evolve, the conversation is shifting from capability to responsibility. The most successful organizations will be the ones that automate intelligently. Risk-aware AI represents an important step in this journey. It ensures that systems are powerful as well as prudent, thoughtful and wise.

Data and speed drive everything today, so restraint often seems counterintuitive. But in AI, knowing when not to act can be more valuable than acting quickly. Designing systems with this mindset requires foresight, discipline, and the right partner.

PCPL helps businesses move beyond experimental AI adoption to responsible, risk-aware intelligence, where every decision is accountable.
